The Memory Crisis in Large Language Models

Large Language Models (LLMs) are hitting a fundamental wall: the longer a conversation runs, the more context they must carry, and the more likely they are to lose track of earlier details. Current transformer architectures store conversation history as sequential text tokens, creating an expensive memory treadmill: because the cost of self-attention grows quadratically with sequence length, each new interaction demands more computational resources. This token-based approach has created a paradox where models become less efficient as they become more capable, forcing developers to choose between coherent long-term conversations and practical deployment costs.

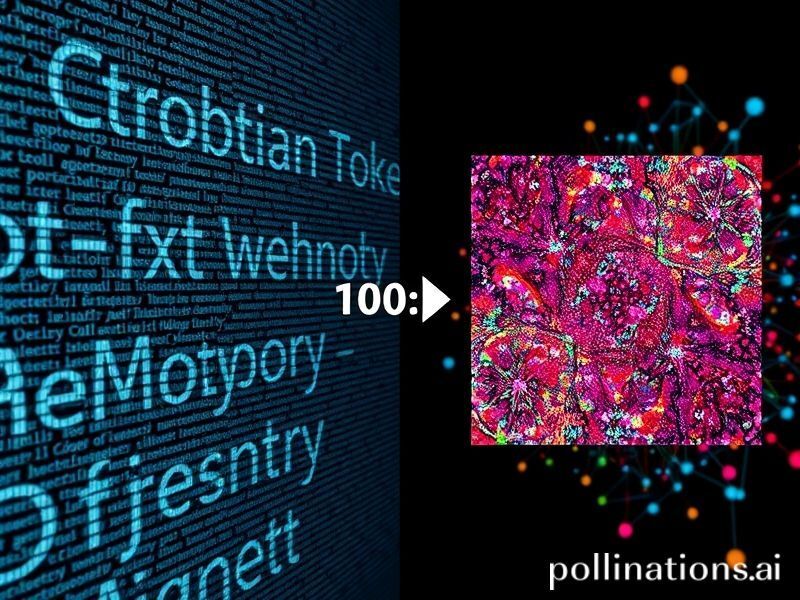

DeepSeek’s breakthrough image-based memory system challenges this paradigm by converting conversational context into compressed visual representations. Instead of storing “The user mentioned they prefer Python over JavaScript last Tuesday” as roughly 12 text tokens, the system encodes this semantic information as a compact visual pattern that can be referenced with minimal computational overhead. This approach could fundamentally reshape how AI systems remember, potentially enabling desktop-class hardware to maintain conversation histories that currently require data-center-scale infrastructure.

How Visual Memory Works

The innovation draws on dual-coding theory from cognitive psychology, which holds that the brain stores information both verbally and visually. DeepSeek’s architecture employs a vision-language encoder that transforms semantic content into 64×64 pixel “memory maps” – compressed visual representations that capture relationships, entities, and temporal information without preserving exact textual sequences.

The Technical Architecture

The system operates through three stages:

- Semantic Compression Layer: Converts text conversations into high-dimensional vectors using modified CLIP (Contrastive Language-Image Pre-training) embeddings

- Visual Encoding Stage: Transforms these vectors into compact visual patterns using a diffusion-based autoencoder trained specifically on conversation datasets

- Hierarchical Retrieval System: Maintains multiple resolution levels of visual memories, allowing rapid access to relevant context without loading entire conversation histories
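To make the three stages concrete, here is a toy Python sketch of the flow. It is not DeepSeek’s implementation, and every name in it (`embed`, `to_memory_map`, `downsample`, `MemoryStore`) is hypothetical. A hashing-trick bag-of-words vector stands in for the CLIP-style embeddings, a simple rescale-and-fold stands in for the diffusion-based autoencoder, and average pooling supplies the coarser resolution level used for a cheap first retrieval pass.

```python
import hashlib

SIDE = 64  # memory-map edge length, taken from the 64x64 figure in the text


def embed(text: str, dim: int = SIDE * SIDE) -> list[float]:
    """Toy hashing-trick embedding; a stand-in for a learned text encoder."""
    vec = [0.0] * dim
    for word in text.lower().split():
        digest = hashlib.sha256(word.encode("utf-8")).digest()
        vec[int.from_bytes(digest[:4], "big") % dim] += 1.0
    return vec


def to_memory_map(vec: list[float], side: int = SIDE) -> list[list[int]]:
    """Scale a vector to 0..255 and fold it into a side x side pixel grid."""
    peak = max(vec) or 1.0  # avoid dividing by zero for empty text
    pixels = [round(255 * v / peak) for v in vec]
    return [pixels[r * side:(r + 1) * side] for r in range(side)]


def downsample(grid: list[list[int]], factor: int) -> list[list[float]]:
    """Average-pool the grid by `factor` to get a coarser resolution level."""
    size = len(grid) // factor
    return [
        [
            sum(grid[r * factor + i][c * factor + j]
                for i in range(factor) for j in range(factor)) / factor ** 2
            for c in range(size)
        ]
        for r in range(size)
    ]


def similarity(a, b) -> float:
    """Dot product between two grids of the same shape."""
    return sum(x * y for row_a, row_b in zip(a, b) for x, y in zip(row_a, row_b))


class MemoryStore:
    """Keeps each memory at full and coarse resolution for two-pass lookup."""

    def __init__(self, coarse_factor: int = 8):
        self.coarse_factor = coarse_factor
        self.entries = []  # tuples of (text, full_map, coarse_map)

    def add(self, text: str) -> None:
        full = to_memory_map(embed(text))
        self.entries.append((text, full, downsample(full, self.coarse_factor)))

    def retrieve(self, query: str, shortlist: int = 2) -> str:
        q_full = to_memory_map(embed(query))
        q_coarse = downsample(q_full, self.coarse_factor)
        # Pass 1: cheap comparison on the coarse maps.
        ranked = sorted(self.entries,
                        key=lambda e: similarity(q_coarse, e[2]), reverse=True)
        # Pass 2: re-rank only the shortlist at full resolution.
        return max(ranked[:shortlist], key=lambda e: similarity(q_full, e[1]))[0]


if __name__ == "__main__":
    store = MemoryStore()
    store.add("user prefers python over javascript")
    store.add("meeting moved to friday afternoon")
    print(store.retrieve("python javascript"))
```

The two-pass lookup is the point of the sketch: most candidates are rejected using the small 8×8 maps, and only a shortlist is compared at full 64×64 resolution. A real system would replace each stand-in with a trained model.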

Each visual memory token reportedly represents approximately 500-800 text tokens’ worth of semantic information, and the overall compression ratio is put at nearly 100:1 compared to traditional token storage. More remarkably, retrieval complexity is said to remain constant regardless of conversation length, whereas the cost of attending over sequential text grows with the number of tokens.
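The arithmetic behind these figures can be checked with a few lines of Python. This is a back-of-the-envelope sketch using only the numbers quoted above; the function names and the 100,000-token example history are illustrative assumptions. Note that 500-800 text tokens per visual token works out to 500:1 to 800:1 on a pure token-count basis, so the 100:1 figure presumably measures something else, such as stored bytes, which the text does not specify.

```python
def visual_tokens_needed(text_tokens: int, text_per_visual: int) -> int:
    """Visual tokens required to cover a text history, rounding up."""
    if text_per_visual <= 0:
        raise ValueError("text_per_visual must be positive")
    return -(-text_tokens // text_per_visual)  # integer ceiling division


def compression_ratio(text_tokens: int, visual_tokens: int) -> float:
    """Text tokens represented per visual token."""
    if visual_tokens <= 0:
        raise ValueError("visual_tokens must be positive")
    return text_tokens / visual_tokens


if __name__ == "__main__":
    history = 100_000  # hypothetical conversation length in text tokens
    for per_visual in (100, 500, 800):
        n = visual_tokens_needed(history, per_visual)
        print(f"{per_visual} text tokens per visual token -> {n} visual tokens "
              f"({compression_ratio(history, n):.0f}:1)")
```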

Industry Implications

This technological shift arrives at a critical moment for AI deployment. Current enterprise LLM implementations face ballooning costs as conversation histories expand, with some organizations spending over $50,000 monthly on memory-intensive applications like customer service bots and coding assistants.

Hardware Democratization

Visual memory systems could collapse the hardware requirements for sophisticated AI applications:

- Edge Computing Revolution: Smartphones could maintain week-long conversation contexts without cloud connectivity

- Small Business Accessibility: Retail chatbots could keep month-long customer memory on single-GPU setups

- Privacy-First AI: Sensitive conversations stored locally as visual patterns rather than searchable text

Early benchmarks suggest an 87% reduction in memory requirements for maintaining equivalent conversation quality, potentially bringing GPT-4-level contextual understanding to hardware configurations currently limited to GPT-3.5-class models.
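To see what an 87% reduction would mean in practice, the following Python sketch converts it into a remaining memory footprint and a capacity multiplier. The 40 GB baseline is a made-up example value, not a figure from the benchmarks, and the function names are hypothetical.

```python
def memory_after_reduction(baseline_gb: float, reduction: float = 0.87) -> float:
    """Memory still needed after cutting the baseline by `reduction`."""
    if not 0.0 <= reduction < 1.0:
        raise ValueError("reduction must be in [0, 1)")
    return baseline_gb * (1.0 - reduction)


def capacity_multiplier(reduction: float = 0.87) -> float:
    """How much more history fits in the same memory budget."""
    if not 0.0 <= reduction < 1.0:
        raise ValueError("reduction must be in [0, 1)")
    return 1.0 / (1.0 - reduction)


if __name__ == "__main__":
    baseline = 40.0  # hypothetical context memory in GB
    print(f"Remaining footprint: {memory_after_reduction(baseline):.1f} GB")
    print(f"History per GB grows by about {capacity_multiplier():.1f}x")
```

Under that assumed baseline, 40 GB of context memory would shrink to about 5.2 GB, or equivalently the same budget would hold roughly 7.7 times as much history.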

Competitive Landscape Disruption

The implications extend beyond technical efficiency. Companies like OpenAI and Anthropic have built competitive moats around their ability to scale text-based memory systems. DeepSeek’s approach could level this playing field, enabling smaller competitors to offer comparable long-context capabilities without massive infrastructure investments.

Microsoft has already begun exploring similar visual memory approaches for Copilot, while Google reportedly has a parallel research initiative codenamed “Project Mnemosyne.” The race is on to commercialize these systems before the current generation of text-memory architectures becomes obsolete.

Challenges and Limitations

Despite promising early results, visual memory systems face significant hurdles. The compression process inevitably loses information – particularly subtle linguistic nuances, exact quotations, and precise temporal sequences. This creates trade-offs between memory efficiency and conversational accuracy that developers must carefully navigate.
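The trade-off can be illustrated with ordinary quantization, used here purely as a generic stand-in for lossy compression rather than as a model of DeepSeek’s encoder. In this Python sketch (all names hypothetical), values are stored with fewer distinct levels and then restored, and the worst-case error grows as the representation gets smaller.

```python
def quantize(values: list[float], levels: int) -> list[int]:
    """Map floats in [0, 1] onto `levels` evenly spaced integer codes."""
    return [round(v * (levels - 1)) for v in values]


def dequantize(codes: list[int], levels: int) -> list[float]:
    """Map integer codes back to floats in [0, 1]."""
    return [c / (levels - 1) for c in codes]


def max_error(values: list[float], levels: int) -> float:
    """Largest absolute difference after a quantize/dequantize round trip."""
    restored = dequantize(quantize(values, levels), levels)
    return max(abs(a - b) for a, b in zip(values, restored))


if __name__ == "__main__":
    signal = [i / 100 for i in range(101)]
    for levels in (256, 16, 4):
        print(f"{levels:>3} levels -> max error {max_error(signal, levels):.4f}")
```

Fewer levels mean a smaller representation and a larger round-trip error, which is the same kind of tension between memory efficiency and fidelity described above.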

Technical Obstacles

Current limitations include:

- Hallucination Amplification: Visual compression can create false associations between unrelated concepts

- Cultural Bias Encoding: Visual patterns may inadvertently preserve and amplify cultural stereotypes present in training data

- Interpretability Challenges: Debugging why an AI “remembers” something incorrectly becomes nearly impossible when memories exist as abstract visual patterns

Researchers are developing “visual memory probes” – specialized tools that can partially decode stored patterns for debugging purposes, but these remain rudimentary compared to traditional text-based analysis methods.

Future Possibilities

The convergence of visual memory with emerging technologies opens fascinating possibilities. Imagine AI assistants that maintain years of conversation history on your smartphone, or educational tutors that remember every student’s learning journey across multiple subjects as compact visual patterns.

Beyond Conversation

DeepSeek’s researchers are already exploring applications beyond text:

- Multi-Modal Memory: Storing combinations of text, images, and audio as unified visual representations

- Collaborative Memory Networks: Visual patterns that can be efficiently shared between AI agents, enabling swarm intelligence with minimal communication overhead

- Evolving Memory Structures: Systems where visual memories organically grow and restructure themselves based on usage patterns

Perhaps most intriguingly, visual memory could enable AI systems that genuinely “dream” – consolidating and reorganizing visual memories during low-power states, similar to how human brains process memories during sleep.

The Road Ahead

DeepSeek’s image-based memory represents more than an incremental improvement – it’s a fundamental reimagining of how artificial intelligence systems store and retrieve information. As we approach the limits of text-token scaling, visual memory offers a path toward more efficient, accessible, and potentially more human-like AI systems.

The technology remains experimental, with commercial deployment likely 12-18 months away. However, the underlying principles have already influenced major AI labs to reconsider their memory architectures. Whether visual memory becomes the dominant paradigm or inspires entirely new approaches, one thing is clear: the future of AI memory will look very different from its text-heavy present.

For developers and businesses planning AI strategies, the message is unambiguous – start designing systems that can adapt to post-token memory architectures. The companies that master visual memory first won’t just save on computational costs; they’ll unlock entirely new categories of AI applications that today’s hardware-constrained systems cannot support.