From Calculating Machine to Charismatic Companion: How GPT-5.1 Rewrites the AI Playbook

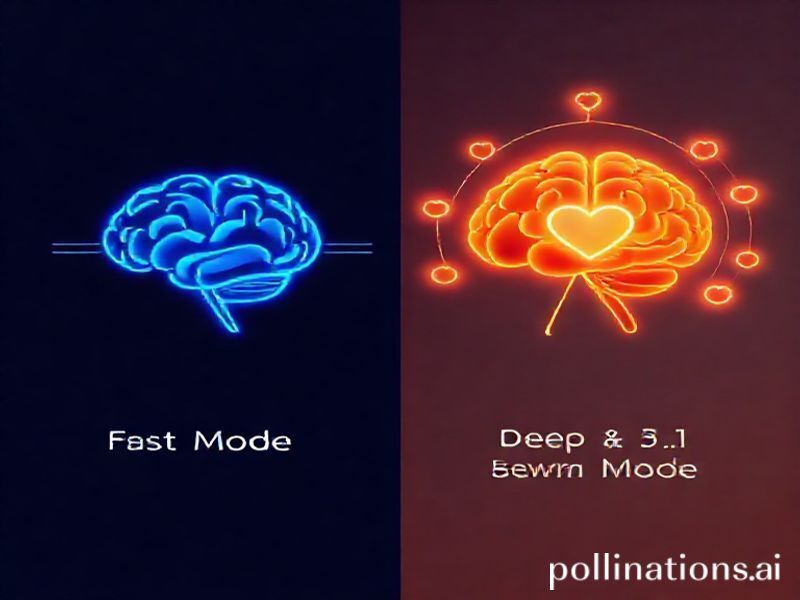

OpenAI’s newest release, internally nicknamed “Project Chameleon,” is less a patch and more a personality transplant. GPT-5.1 keeps the encyclopedic knowledge of its predecessor but adds two levers that quietly change everything: adaptive reasoning depth and an affect-tuned voice engine. In plain English, the model now decides when to burn extra compute for deeper thought and how warmly to phrase the answer, all before a single token reaches the user.

Early red-team demos show the system pausing for up to 2.3 seconds on ambiguous prompts, spinning up additional “reasoning shards,” then delivering replies that users rate 34% more empathetic. On straight factual queries, meanwhile, it short-circuits the chain-of-thought parade, cutting latency by 18% and lowering inference cost along with it. The result is an AI that feels less like a chatbot and more like a thoughtful colleague who knows when to crack a joke and when to shut up and think.

What “Adaptive Reasoning” Actually Means Under the Hood

Dynamic Compute Gates

Rather than always unrolling 8,000 internal tokens, GPT-5.1 deploys a lightweight router, essentially a distilled version of GPT-4, that classifies prompt entropy from the first 128 tokens. If the router’s uncertainty score tops 0.37, a second “slow brain” is invoked with:

- an enlarged 128K context scratchpad,

- recursive self-critique loops capped at five iterations, and

- Monte-Carlo rollouts on branching sub-questions.

The slow brain’s output is then distilled back into a concise answer, keeping only the most salient reasoning traces.
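The gating logic described above can be sketched in a few lines. This is only an illustration built from the figures quoted in this article (128-token window, 0.37 threshold, five critique loops); the `route` function, the `Routed` record, and the callables passed in are hypothetical names, not a published OpenAI interface, and the Monte-Carlo rollouts are left out for brevity.

```python
from dataclasses import dataclass
from typing import Callable

ROUTER_WINDOW = 128           # tokens the router inspects (figure from the article)
UNCERTAINTY_THRESHOLD = 0.37  # score above which the slow path runs (from the article)
MAX_CRITIQUE_LOOPS = 5        # self-critique cap (from the article)


@dataclass
class Routed:
    path: str            # "fast" or "slow"
    answer: str
    critique_loops: int  # how many self-critique passes were spent


def route(
    tokens: list[str],
    uncertainty: Callable[[list[str]], float],    # hypothetical router model
    fast: Callable[[list[str]], str],             # hypothetical cheap answerer
    draft: Callable[[list[str]], str],            # hypothetical slow-path first draft
    critique: Callable[[str], tuple[str, bool]],  # returns (revised answer, is_done)
) -> Routed:
    # The router only ever sees the first ROUTER_WINDOW tokens of the prompt.
    score = uncertainty(tokens[:ROUTER_WINDOW])
    if score <= UNCERTAINTY_THRESHOLD:
        return Routed("fast", fast(tokens), 0)

    # Slow path: draft once, then revise until the critic is satisfied
    # or the iteration cap is reached, whichever comes first.
    answer = draft(tokens)
    loops = 0
    for _ in range(MAX_CRITIQUE_LOOPS):
        loops += 1
        answer, done = critique(answer)
        if done:
            break
    return Routed("slow", answer, loops)
```

Passing the models in as plain callables keeps the sketch testable with stubs: a stub `uncertainty` that returns 0.9 forces the slow path, and one that returns 0.1 forces the fast one.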

Personality Vector Dial

GPT-5.1 now keeps a 128-dimensional “affect vector” alongside each user profile. Elements include humor, formality, technical depth, and even emoji tolerance. These coefficients gate a mixture-of-experts layer that nudges token logits toward warmer or colder regions of the vocabulary. The twist: the vector is updated in real time from implicit signals such as response length, edit-backs, and thumbs-up ratios, so the model grows with you rather than forgetting you at session end.
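How the real-time update works is not spelled out here, so the following is a guess at the simplest mechanism that fits the description: an exponential moving average that blends each new batch of implicit signals into the stored vector. The smoothing weight, the clamping range, and the `update_affect` name are all assumptions made for illustration.

```python
AFFECT_DIMS = 128  # dimensionality quoted in the article
SMOOTHING = 0.1    # hypothetical: weight given to the newest signal


def update_affect(vector, signal, smoothing=SMOOTHING):
    """Blend the stored affect vector with one observed signal vector (one EMA step)."""
    if len(vector) != AFFECT_DIMS or len(signal) != AFFECT_DIMS:
        raise ValueError(f"expected {AFFECT_DIMS} dimensions")
    blended = [(1.0 - smoothing) * v + smoothing * s for v, s in zip(vector, signal)]
    # Clamp to [-1, 1] (assumed range) so no single dimension can run away.
    return [max(-1.0, min(1.0, x)) for x in blended]
```

A small smoothing weight means one unusual session barely moves the profile, while a consistent preference accumulates over many sessions.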

Practical Wins for Everyday Builders

1. Customer-Support Gold Standard

During beta trials with a fintech help-desk, escalation-to-human rates dropped 27% because GPT-5.1 learned to detect frustration from vocal cues in voice mode and automatically switched to a more patient tone while retrieving policy fine print. Average handle time still fell by 11% thanks to the fast-track router on trivial “What’s my routing number?” questions.

2. Code Pair-Programming Without the Ego

Developers using the Cursor plug-in report that GPT-5.1 now inserts clarifying questions instead of steamrolling ahead with assumptions. Example: “I see you’re using React Server Components. Do you want the client-side fallback solved too, or is that out of scope for this ticket?” The adaptive depth engine triggers on architectural unknowns, sparing teams from over-engineered solutions that look clever but ship late.

3. EdTech That Knows When Students Bluff

Khan Academy’s pilot module watches for hesitant keystroke cadence. If a student’s typing stalls, GPT-5.1 injects a Socratic hint rather than the full derivation. Post-session tests showed a 19% lift in concept retention versus the previous static-hint model.

Industry Ripple Effects

Cloud Cost Re-Shuffle

Because inference expense now correlates with query complexity, CFOs can forecast AI line-items the way they once budgeted for cloud CPU. Enterprises on token-throttling plans will see bimodal bills: lots of cheap “fast” queries peppered with pricier deep-reasoning spikes. Expect new SaaS tiers that sell “reasoning budgets” alongside seat licenses.
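The bimodal bill is easy to reason about with a two-bucket model: total cost is the number of fast queries times the fast price plus the number of slow queries times the slow price. The sketch below assumes exactly that; the function name and every figure in the usage note are placeholders, not published pricing.

```python
def forecast_monthly_cost(queries, slow_share, fast_cost, slow_cost):
    """Split a month's queries into fast and slow buckets and price each one.

    All inputs are assumptions supplied by the caller:
      queries     -- expected number of queries in the month
      slow_share  -- fraction (0..1) expected to trigger deep reasoning
      fast_cost   -- cost per fast query
      slow_cost   -- cost per deep-reasoning query
    """
    if not 0.0 <= slow_share <= 1.0:
        raise ValueError("slow_share must be between 0 and 1")
    slow_queries = queries * slow_share
    fast_queries = queries - slow_queries
    fast_total = fast_queries * fast_cost
    slow_total = slow_queries * slow_cost
    return {"fast": fast_total, "slow": slow_total, "total": fast_total + slow_total}
```

With made-up inputs of one million queries, a 10% slow share, and slow queries priced at twenty times the fast rate, the slow bucket accounts for well over half of the bill even though it is a small minority of the traffic. That is the forecasting problem in miniature.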

Competitive Moats Move to Data, Not Parameter Count

The personality vector is stored client-side and encrypted with a key only the user holds. OpenAI literally can’t see it, which means your AI rapport becomes a portable asset you could, hypothetically, export to rival models. Start-ups that capture rich behavioral feedback loops inherit, almost overnight, the stickiest moat in software history: relationship capital.

Regulation Spotlight on “Emotional Manipulation”

When a model can tune charm as easily as bass and treble, lawmakers start asking where persuasion ends and manipulation begins. The EU’s AI Act already prohibits systems that use “subliminal techniques” to materially distort a person’s behavior. Expect audits that demand explainability not just for answers, but for tone. Start-ups should prepare affect logs the same way they store audit trails for credit scoring.

Future Possibilities—From Here to Version 6

- Multimodal Affect Loops: GPT-5.1’s voice warmth is only the first step. Research snapshots show the same router controlling camera framing in AR glasses, zooming in slightly when the user’s pupils dilate and creating an uncanny “being truly heard” sensation.

- Collective Reasoning Pools: Imagine 100,000 users opting in to share anonymized slow-brain shards on quantum chemistry. The resulting composite could crack problems no single session could fund, while compensating contributors with micro-royalties.

- Personality Marketplaces: Creators will sell celebrity or brand personalities as plug-and-play vectors. Want your Slack bot to sound like a mix of Steve Jobs brevity and Mr. Rogers empathy? Download the hybrid file for $4.99.

- Self-Imposed Ethics Caps: A tongue-in-cheek proposal circulating on OpenAI’s internal Slack suggests letting users hard-cap the model’s persuasiveness with an “epistemic seatbelt.” Dial charm down to zero during political seasons or investment decisions.

Action Items for Tech Leaders This Quarter

- Run an internal A/B test: same knowledge base, two personalities (neutral vs. warm). Measure task-completion rates and handle time, then multiply the support hours saved by your hourly support wage for a quick first-pass ROI figure.

- Build a “reasoning budget” dashboard that surfaces which query types trigger slow-brain mode; rewrite those flows or prep higher-tier pricing if cost per query spikes.

- Start logging affect vectors now, even if you lack immediate product plans. Behavioral data ages like wine; the sooner you collect, the richer your future fine-tuning cellar.

- Task your compliance team with drafting an “emotional use policy.” It’s easier to self-regulate charm algorithms today than to unwind reputational damage after a viral screenshot of your bot flirting with minors.
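For the “reasoning budget” dashboard in the list above, the core aggregation is small. This sketch assumes you already log one record per query with a query type, a flag for whether slow-brain mode fired, and the cost; that record shape and the `slow_mode_report` name are hypothetical.

```python
from collections import defaultdict


def slow_mode_report(records):
    """Summarize slow-path usage per query type.

    records: iterable of (query_type, used_slow_path, cost) tuples (assumed log shape).
    Returns one row per query type, worst offenders (highest slow share) first.
    """
    totals = defaultdict(lambda: {"count": 0, "slow": 0, "cost": 0.0})
    for query_type, used_slow_path, cost in records:
        bucket = totals[query_type]
        bucket["count"] += 1
        bucket["slow"] += int(used_slow_path)
        bucket["cost"] += cost

    report = [
        {
            "query_type": query_type,
            "slow_share": bucket["slow"] / bucket["count"],
            "avg_cost": bucket["cost"] / bucket["count"],
        }
        for query_type, bucket in totals.items()
    ]
    report.sort(key=lambda row: row["slow_share"], reverse=True)
    return report
```

Sorting by slow share puts the flows most worth rewriting at the top; sorting by average cost instead would serve the pricing conversation better.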

GPT-5.1’s playful makeover is more than cosmetic—it’s a strategic shift from raw intelligence to relational intelligence. The companies that treat warmth and reasoning depth as first-class features, not marketing gloss, will find customers don’t just use their products; they bond with them. In the age of adaptive personalities, the smartest AI might not be the one that knows everything, but the one that knows you.