The Heat Revolution: How Thermodynamic Computing Could Transform AI Forever

In the sprawling data centers that power our digital world, a quiet crisis is brewing. As artificial intelligence models grow larger and more capable, their appetite for electricity is straining power grids. But what if part of the solution isn’t finding more energy, but putting to work something that’s already there, hiding in plain sight as heat?

Enter Extropic, a startup that wants to rewrite the rules of computation itself. Its approach is thermodynamic computing, which uses thermal noise (the random jiggling of atoms and electrons at room temperature) as the primary computational resource. If it works as the company hopes, the shift could cut data-center power consumption by orders of magnitude for suitable workloads while opening new frontiers in AI processing.

The Physics of Computation: A New Paradigm

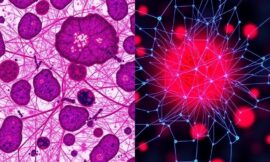

Traditional computing treats heat as the enemy—wasted energy that must be removed through expensive cooling systems. Extropic’s founders, drawing inspiration from biological neurons that operate efficiently at body temperature, asked a radical question: What if heat itself could do the computing?

Their thermodynamic processors exploit the natural fluctuations of electrons in semiconductor materials. Rather than fighting thermal noise, these chips embrace it, using the random behavior of thermally agitated particles as a built-in source of randomness for calculations. The chips still draw electrical power; what changes is that noise becomes a resource instead of something to be suppressed. It’s computing that leans on entropy, the measure of disorder that engineers have long treated as an unavoidable cost of using energy.

From Bug to Feature: The Science Behind Thermal Computing

At the heart of Extropic’s technology lies a profound insight: many AI algorithms, particularly those used in probabilistic machine learning, don’t require deterministic precision. Neural networks, Bayesian inference, and sampling algorithms can work beautifully with inherently stochastic components.

Their prototype devices use specialized thermodynamic units that can be thought of as “temperature transistors.” These components modulate local thermal fluctuations to encode information, performing probabilistic operations directly in the physics of heat flow. Early simulations suggest these units could use roughly 1,000 times less energy per operation than traditional CMOS transistors for certain AI workloads, though that figure has yet to be confirmed on hardware at scale.
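To make the idea of computing with biased randomness concrete, here is a minimal software sketch of Gibbs sampling built from "noisy bits." It is an illustration only, not Extropic's design: the names `noisy_bit` and `gibbs_sample` are hypothetical, and a pseudo-random number generator stands in for the physical thermal noise a real device would use.

```python
import math
import random


def noisy_bit(bias, rng):
    """Return 1 with probability sigmoid(bias), else 0.

    Software stand-in for a hypothetical hardware unit whose output
    fluctuates randomly but can be tilted by an input signal.
    """
    p_one = 1.0 / (1.0 + math.exp(-bias))
    return 1 if rng.random() < p_one else 0


def gibbs_sample(weights, biases, n_sweeps, rng):
    """Gibbs-sample binary states s_i in {0, 1} from the distribution
    P(s) proportional to exp(sum_i b_i*s_i + sum_{i<j} W_ij*s_i*s_j).

    `weights` must be symmetric with a zero diagonal.
    Returns the state reached at the end of each sweep.
    """
    n = len(biases)
    state = [rng.randint(0, 1) for _ in range(n)]
    samples = []
    for _ in range(n_sweeps):
        for i in range(n):
            # Local field on unit i: its own bias plus input from the others.
            field = biases[i] + sum(
                weights[i][j] * state[j] for j in range(n) if j != i
            )
            # Resample unit i from its conditional distribution.
            state[i] = noisy_bit(field, rng)
        samples.append(tuple(state))
    return samples


if __name__ == "__main__":
    rng = random.Random(0)
    # Two units that individually prefer 0 but are coupled to agree.
    weights = [[0.0, 2.0], [2.0, 0.0]]
    biases = [-1.0, -1.0]
    samples = gibbs_sample(weights, biases, 20000, rng)
    agree = sum(1 for a, b in samples if a == b) / len(samples)
    print(f"fraction of samples where the two units agree: {agree:.2f}")
```

For this two-unit example the exact probability that the units agree is 2 / (2 + 2/e), or about 0.73, so the printed fraction should land near that value. The point of the sketch is that every step is a biased coin flip, which is the kind of operation a noise-driven device could in principle perform natively instead of computing it digitally.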

Industry Implications: A Data Center Revolution

The implications for the AI industry are staggering. Current large language models require megawatts of power for training and operation. GPT-4’s training reportedly consumed enough electricity to power thousands of homes for months. As models scale toward artificial general intelligence, energy demands threaten to become economically and environmentally unsustainable.

Extropic’s technology could reverse this trajectory. Consider these potential transformations:

- Edge AI Revolution: Thermodynamic processors could enable sophisticated AI in devices where power is severely limited—think smart sensors that run neural networks for years on a coin battery

- Cloud Cost Collapse: Data centers might reduce the energy used for AI workloads by 90% or more, sharply lowering the electricity share of what AI computation costs today

- Climate Impact: The AI industry’s carbon footprint, which some projections put above aviation’s by 2030, could be dramatically curtailed

- New Architectures: Algorithms designed specifically for thermodynamic computing could unlock capabilities impossible with deterministic hardware
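To get a feel for what "years on a coin battery" demands, the short calculation below estimates the average power budget. The battery figures are assumptions typical of a CR2032 coin cell (about 225 mAh at a nominal 3 V), not numbers from Extropic, and the estimate ignores self-discharge and conversion losses.

```python
# Assumed figures for a typical CR2032 coin cell (not from the article).
CAPACITY_MAH = 225.0   # nominal capacity in milliamp-hours
NOMINAL_VOLTS = 3.0    # nominal cell voltage
SECONDS_PER_YEAR = 365 * 24 * 3600


def battery_energy_joules(capacity_mah, volts):
    """Total stored energy: charge in coulombs times voltage."""
    coulombs = capacity_mah / 1000.0 * 3600.0
    return coulombs * volts


def average_power_watts(energy_joules, years):
    """Average power draw that would drain the cell in `years` years."""
    return energy_joules / (years * SECONDS_PER_YEAR)


energy = battery_energy_joules(CAPACITY_MAH, NOMINAL_VOLTS)
for years in (1, 2, 5):
    microwatts = average_power_watts(energy, years) * 1e6
    print(f"{years} year(s): about {microwatts:.0f} microwatts on average")
```

Under these assumptions the cell holds roughly 2,400 joules, which works out to about 77 microwatts for one year of operation and about 15 microwatts for five. Any always-on sensor, whatever its processor, has to fit sensing, computation, and communication inside a budget of that size.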

The Biological Blueprint

What’s particularly intriguing is how closely Extropic’s approach mirrors biological computation. The human brain runs on roughly 20 watts of power, less than a typical incandescent light bulb, while still outperforming supercomputers at many tasks. Neurons communicate through noisy, probabilistic spike trains, tolerating randomness at the molecular level rather than spending energy to eliminate it.

By embracing rather than suppressing thermal noise, thermodynamic computing could finally bridge the efficiency gap between silicon and biology. This isn’t just about saving energy—it’s about accessing entirely new computational paradigms that blur the line between hardware and algorithm.

Challenges and Skepticism

Of course, revolutionizing a semiconductor industry worth hundreds of billions of dollars isn’t without obstacles. Critics point to significant technical hurdles:

- Temperature Sensitivity: Precise control of thermal fluctuations across large-scale integrated circuits remains unproven

- Manufacturing Compatibility: New fabrication processes would require massive capital investment

- Algorithm Adaptation: Most existing software would need fundamental redesign to leverage probabilistic hardware

- Error Correction: Without traditional digital error correction, ensuring reliable computation poses theoretical challenges

Extropic acknowledges these concerns but remains optimistic. The company says it has demonstrated proof-of-concept devices operating at room temperature and is working with major semiconductor foundries to develop compatible manufacturing processes. Its reported $50 million Series A funding round, led by prominent venture capital firms, suggests serious investor confidence.

The Road Ahead: From Laboratory to Market

Extropic’s roadmap calls for thermodynamic co-processors that can accelerate specific AI workloads within the next three years. These hybrid chips would combine traditional digital circuits with thermodynamic units, allowing gradual adoption without requiring complete system redesigns.

Looking further ahead, the company envisions fully thermodynamic supercomputers that operate at the theoretical limits of energy efficiency. These machines could make today’s most powerful AI clusters look like abacuses in comparison, potentially enabling:

- Real-time training of models with trillions of parameters

- Ubiquitous AI in every device, no matter how power-constrained

- New forms of probabilistic artificial intelligence that think more like biological brains

- Computing infrastructure that actually reduces global energy consumption as it scales
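The "theoretical limits of energy efficiency" mentioned above are usually taken to mean the Landauer bound, the minimum energy needed to erase one bit of information at a given temperature: k_B × T × ln 2. The sketch below computes it at room temperature and compares it with a per-operation energy of one picojoule. That picojoule figure is a hypothetical value chosen only to illustrate the ratio; it is not a measurement of any particular chip.

```python
import math

BOLTZMANN_J_PER_K = 1.380649e-23  # exact by SI definition


def landauer_limit_joules(temperature_kelvin):
    """Minimum energy to erase one bit at the given temperature."""
    return BOLTZMANN_J_PER_K * temperature_kelvin * math.log(2)


def headroom(energy_per_op_joules, temperature_kelvin=300.0):
    """How many times above the Landauer bound an operation sits."""
    return energy_per_op_joules / landauer_limit_joules(temperature_kelvin)


room_temp_limit = landauer_limit_joules(300.0)
print(f"Landauer bound at 300 K: {room_temp_limit:.2e} J per bit erased")

# Hypothetical per-operation energy, chosen only to illustrate the ratio.
example_energy = 1e-12  # one picojoule
print(f"1 pJ per operation is about {headroom(example_energy):.1e}x the bound")
```

At 300 K the bound comes to about 2.87 × 10⁻²¹ joules per erased bit, so an operation costing one picojoule would sit several hundred million times above it. The bound applies to logically irreversible operations such as erasure, so it is a reference point for how much room remains in principle rather than a promise of what any hardware will reach.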

A Thermodynamic Future

The audacity of Extropic’s vision cannot be overstated. They’re not just proposing a new chip architecture—they’re suggesting we rethink the fundamental relationship between computation and energy. In a world increasingly defined by AI capabilities, the company that controls the most efficient computing platform could reshape entire industries.

Whether Extropic succeeds or fails, their work highlights an essential truth: the future of AI may not lie in building bigger, more power-hungry machines, but in discovering entirely new ways to compute. As we stand at the threshold of an AI-powered future, thermodynamic computing offers a path where artificial intelligence conserves rather than strains our planet’s resources.

The heat that once signaled computational waste might soon power the very intelligence that solves our greatest challenges. In the dance between order and chaos, between energy and information, the next revolution in AI is already warming up—one thermal fluctuation at a time.